Dive into the realm of Deep Learning, unlocking hidden data secrets and paving the way for groundbreaking discoveries in the intelligent future.

“Deep Learning” is a term generating a lot of buzz today. It is essentially the backbone of the artificial intelligence revolution that we are currently witnessing. In simple terms, deep learning is a form of machine learning in which multi-layered neural networks learn useful patterns directly from data instead of following hand-written rules.

To understand how deep learning works and its importance, let’s take a closer look at its components, learning process, and applications.

1. Understanding Deep Learning

1.1 Definition of Deep Learning

Deep learning is a subset of machine learning, itself a branch of artificial intelligence. It is concerned with algorithms called artificial neural networks, which are loosely inspired by the structure and function of the brain.

Deep learning has become increasingly popular in recent years due to its ability to process large amounts of data and its potential to revolutionize industries such as healthcare, finance, and transportation.

1.2 The History of Deep Learning

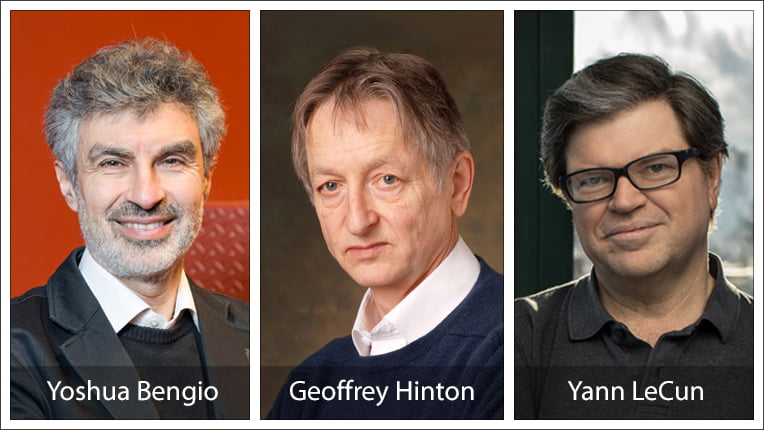

Deep learning has a rich history that dates back to the 1940s, when the first mathematical models of artificial neurons were proposed. Neural network research gained momentum in the 1980s and 1990s, thanks to the work of researchers such as Geoffrey Hinton, Yann LeCun, and Yoshua Bengio.

Despite this initial promise, progress was slow due to limited computing power and a lack of data. It was not until the mid-2000s that deep learning began to gain wider traction, thanks to the availability of large datasets and advancements in computing power.

Today, deep learning is a rapidly growing field with a wide range of applications, from image and speech recognition to natural language processing and autonomous vehicles.

1.3 Differences Between Deep Learning and Traditional Machine Learning

The main difference between deep learning and traditional machine learning is how features are obtained. Traditional machine learning usually relies on people to design the features a model uses (feature engineering), whereas deep learning learns useful features directly from raw data as part of training. Both kinds of model improve only through training on data, but deep learning models tend to keep benefiting as datasets grow, which makes them well suited to tasks such as image and speech recognition.

Another key difference between deep learning and traditional machine learning is the type of data they handle well. Traditional machine learning works best with structured data, such as tables and databases, and typically needs hand-crafted features for anything else, whereas deep learning models can learn directly from unstructured data, such as images, videos, and speech.

This makes deep learning ideal for tasks such as object recognition and natural language processing. Despite these differences, both deep learning and traditional machine learning have their strengths and weaknesses, and the choice between the two will depend on the specific task at hand.

2. Key Components of Deep Learning

Deep learning is a subset of machine learning that uses artificial neural networks to model and solve complex problems. Neural networks consist of layers of interconnected nodes that perform computations, and the output of one layer becomes the input of the next layer. Here are some key components of deep learning.

2.1 Neural Networks

Neural networks are the backbone of deep learning. They are a family of models loosely inspired by the structure and function of the human brain, and they can be used for a variety of tasks, such as image recognition, natural language processing, and speech recognition.

The input layer receives the input data, and the output layer produces the output prediction. The layers in between are called hidden layers, and they perform computations on the input data to transform it into a form that is useful for the output layer.
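
To make the layer-by-layer flow concrete, here is a minimal sketch in plain Python. The network shape (2 inputs, 2 hidden nodes, 1 output), the hand-picked weights, and the helper names `dense`, `relu`, and `forward` are all assumptions made for illustration, not part of any particular library.

```python
def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum of the inputs plus a bias, per node."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Non-linearity applied to the hidden layer outputs."""
    return [max(0.0, v) for v in values]

def forward(x, layers):
    """Feed x through each (weights, biases) pair; the output of one layer
    becomes the input of the next. ReLU is applied to hidden layers only."""
    for i, (weights, biases) in enumerate(layers):
        x = dense(x, weights, biases)
        if i < len(layers) - 1:
            x = relu(x)
    return x

# Hypothetical hand-picked parameters: 2 inputs -> 2 hidden nodes -> 1 output
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, -1.0]),  # hidden layer
    ([[2.0, 1.0]], [0.1]),                     # output layer
]
prediction = forward([3.0, 1.0], layers)
print(prediction)
```

In a real model the weights would be learned during training rather than written by hand.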

Neural networks are trained with the help of a process called backpropagation, which calculates the gradient of the loss function with respect to the weights and biases, that is, how much the loss changes when each parameter changes. An optimization algorithm then uses this gradient to update the weights and biases so that the difference between the predicted output and the actual output shrinks.

2.2 Activation Functions

Activation functions are mathematical functions applied to the output of a neuron, often squashing it into a specific range. They introduce non-linearity into the neural network, which allows it to model complex relationships between the input and output data. Common activation functions used in deep learning include the sigmoid, ReLU, and tanh functions.

The sigmoid function is a smooth curve that maps any input to a value between 0 and 1. It is used to model probabilities, and is commonly used in the output layer of a neural network for binary classification tasks.

ReLU stands for Rectified Linear Unit and is defined as max(0, x). It is a piecewise linear function that is easy to compute and has been shown to work well in practice. It is commonly used in the hidden layers of a neural network.

The tanh function is a smooth curve that maps any input to a value between -1 and 1. It is similar to the sigmoid function, but is symmetric around 0. It is commonly used in the hidden layers of a neural network.
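
The three functions described above can be sketched in a few lines of Python using only the standard `math` module. The two-branch form of `sigmoid` is an implementation choice made here to avoid overflow for large negative inputs.

```python
import math

def sigmoid(x):
    """Maps any input to a value between 0 and 1."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)  # for negative x, exp(x) cannot overflow
    return z / (1.0 + z)

def relu(x):
    """Rectified Linear Unit: max(0, x)."""
    return max(0.0, x)

def tanh(x):
    """Maps any input to a value between -1 and 1, symmetric around 0."""
    return math.tanh(x)

for x in (-2.0, 0.0, 2.0):
    print(f"x={x:+.1f}  sigmoid={sigmoid(x):.3f}  relu={relu(x):.1f}  tanh={tanh(x):+.3f}")
```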

2.3 Loss Functions

Loss functions are used to calculate the difference between the predicted output and the actual output. This difference is called the loss, and the aim of training the deep learning model is to minimize the loss function.

There are many different loss functions that can be used depending on the task at hand. For example, mean squared error is commonly used for regression tasks, while binary cross-entropy is commonly used for binary classification tasks.
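
As a rough illustration, the two loss functions named above might be written as follows. The sample values are made up, and the small `eps` clipping constant is an assumption added to keep the logarithm away from zero.

```python
import math

def mean_squared_error(y_true, y_pred):
    """Average squared difference; typical for regression."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def binary_cross_entropy(y_true, y_pred, eps=1e-12):
    """Average negative log-likelihood for 0/1 labels and predicted probabilities."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(y_true)

mse = mean_squared_error([3.0, 5.0], [2.5, 5.5])
bce_good = binary_cross_entropy([1, 0], [0.9, 0.1])  # confident and right
bce_bad = binary_cross_entropy([1, 0], [0.1, 0.9])   # confident and wrong
print(mse, bce_good, bce_bad)
```

Confident wrong predictions are penalized much more heavily by cross-entropy than confident correct ones, which is the behavior training relies on.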

2.4 Optimization Algorithms

Optimization algorithms are used to adjust the parameters of the neural network during training to minimize the loss function. Common optimization algorithms used in deep learning include stochastic gradient descent, Adam, and RMSProp.

Stochastic gradient descent is a simple optimization algorithm that updates the weights and biases of the neural network using the gradient of the loss function with respect to the weights and biases, estimated on a small random batch of training examples. Adam and RMSProp are more advanced optimization algorithms that adapt the learning rate for each parameter, which often speeds up convergence.
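
The update rules can be compared on a toy problem. The sketch below minimizes the made-up one-parameter loss (w - 3)^2 with a plain gradient descent step and with Adam. The hyperparameter values are common defaults chosen for illustration, and because the toy gradient is exact there is no random batching here.

```python
import math

def grad(w):
    """Gradient of the toy loss (w - 3)^2, whose minimum is at w = 3."""
    return 2.0 * (w - 3.0)

def sgd(w, steps=100, lr=0.1):
    """Plain gradient descent step: move against the gradient."""
    for _ in range(steps):
        w -= lr * grad(w)
    return w

def adam(w, steps=500, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
    """Adam: scales each step using running averages of the gradient and its square."""
    m = v = 0.0
    for t in range(1, steps + 1):
        g = grad(w)
        m = beta1 * m + (1 - beta1) * g        # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g    # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)           # bias correction for early steps
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w

w_sgd = sgd(0.0)
w_adam = adam(0.0)
print(w_sgd, w_adam)
```

Both runs should end close to w = 3. On a problem this simple the adaptive method has no real advantage; its benefit tends to show up when different parameters need very different step sizes.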

Overall, deep learning is a powerful tool for solving complex problems that require large amounts of data and computation. By understanding the key components of deep learning, you can better understand how neural networks work and how to use them effectively for your own projects.

3. How Deep Learning Works

Deep learning is a subset of artificial intelligence that involves training neural networks to learn from large amounts of data. These networks are loosely modeled on the way the human brain works, with layers of interconnected nodes that process information and make predictions.

3.1 The Learning Process

The learning process in deep learning involves feeding large amounts of labeled data into the neural network. The data is labeled to provide the network with examples of what it should be predicting. The network then adjusts its parameters during training to reduce the error between the predicted output and the actual output. This process is repeated over many iterations until the network is able to accurately predict the output for new data.

During the training process, the neural network is able to identify patterns and relationships in the data that can be used to make predictions. This is done by adjusting the weights and biases of the nodes in the network. The weights determine the strength of the connections between nodes, while each bias shifts a node's weighted sum and so controls how easily that node activates.

Once training is complete, the model is tested on new and previously unseen data to evaluate its performance. This is done to ensure that the model is able to generalize and make accurate predictions for new data.
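
The full cycle of training on labeled data and then testing on unseen data can be sketched with a deliberately tiny model: a single weight and bias fitted by gradient descent. The synthetic data (y = 2x + 1 plus noise), the 80/20 split, and the learning rate are all assumptions chosen for this illustration.

```python
import random

random.seed(0)

# Synthetic labeled data: y = 2x + 1 plus a little noise (made up for this sketch)
xs = [random.uniform(-1.0, 1.0) for _ in range(100)]
data = [(x, 2.0 * x + 1.0 + random.gauss(0.0, 0.1)) for x in xs]
train, test = data[:80], data[80:]  # hold out 20% as unseen data

def mse(w, b, samples):
    """Mean squared error of the model w * x + b on the given samples."""
    return sum((w * x + b - y) ** 2 for x, y in samples) / len(samples)

w, b, lr = 0.0, 0.0, 0.1
for epoch in range(500):
    # Gradients of the mean squared error with respect to w and b
    grad_w = sum(2 * (w * x + b - y) * x for x, y in train) / len(train)
    grad_b = sum(2 * (w * x + b - y) for x, y in train) / len(train)
    w -= lr * grad_w
    b -= lr * grad_b

train_mse = mse(w, b, train)
test_mse = mse(w, b, test)
print(f"w={w:.2f} b={b:.2f} train MSE={train_mse:.4f} test MSE={test_mse:.4f}")
```

If the test error is close to the training error, the model is generalizing rather than memorizing. A deep network follows the same loop, only with many more parameters.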

3.2 Backpropagation

Backpropagation is a process used in deep learning to calculate the gradient of the loss function with respect to each parameter in the neural network. The gradients are then used to adjust the parameters of the network during training. This process is repeated over many iterations until the network is able to accurately predict the output for new data.

The backpropagation algorithm works by propagating the error backwards through the network, starting at the output layer and working backwards towards the input layer. This allows the network to identify which nodes are contributing the most to the error and adjust their weights and biases accordingly.
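
The backward pass can be written out by hand for a very small network. The sketch below assumes one input, one tanh hidden node, one linear output node, and a squared-error loss. The starting parameter values are hypothetical, and the numerical check at the end is only there to confirm that the chain-rule gradients are right.

```python
import math

def forward(params, x):
    w1, b1, w2, b2 = params
    h = math.tanh(w1 * x + b1)  # hidden node
    y_hat = w2 * h + b2         # output node
    return h, y_hat

def loss(params, x, y):
    _, y_hat = forward(params, x)
    return (y_hat - y) ** 2

def backprop(params, x, y):
    """Chain rule applied from the output layer back towards the input layer."""
    w1, b1, w2, b2 = params
    h, y_hat = forward(params, x)
    d_yhat = 2.0 * (y_hat - y)   # dLoss/dy_hat
    d_w2 = d_yhat * h            # output-layer weight
    d_b2 = d_yhat                # output-layer bias
    d_h = d_yhat * w2            # error passed back to the hidden node
    d_z = d_h * (1.0 - h * h)    # through tanh: derivative is 1 - tanh(z)^2
    d_w1 = d_z * x               # hidden-layer weight
    d_b1 = d_z                   # hidden-layer bias
    return [d_w1, d_b1, d_w2, d_b2]

def numerical_grad(params, x, y, eps=1e-6):
    """Central finite differences, used only to verify backprop."""
    grads = []
    for i in range(len(params)):
        up, down = list(params), list(params)
        up[i] += eps
        down[i] -= eps
        grads.append((loss(up, x, y) - loss(down, x, y)) / (2 * eps))
    return grads

params = [0.5, -0.2, 1.5, 0.1]  # hypothetical starting values
analytic = backprop(params, x=0.8, y=1.0)
numeric = numerical_grad(params, x=0.8, y=1.0)
print(analytic)
print(numeric)
```

The two printed lists should agree to several decimal places. Deep learning frameworks automate this bookkeeping, but the underlying computation is the same chain rule.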

3.3 Regularization Techniques

Regularization techniques are used in deep learning to prevent overfitting, which occurs when the model performs well on the training data but poorly on new and unseen data. Overfitting can occur when the model is too complex and is able to memorize the training data instead of learning to generalize.

Common regularization techniques used in deep learning include dropout and L1/L2 regularization. Dropout is a technique that randomly drops out nodes during training, so the network cannot rely too heavily on any single node.

L1/L2 regularization involves adding a penalty term to the loss function that grows with the size of the weights (the sum of their absolute values for L1, the sum of their squares for L2), which encourages the network to use smaller weights. By using regularization techniques, deep learning models are able to generalize better and make more accurate predictions for new and unseen data.
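
Both techniques are short to express in code. The sketch below uses the common "inverted" form of dropout, which rescales the surviving values during training; that variant, the penalty strength `lam`, and the example numbers are assumptions made for illustration.

```python
import random

def dropout(activations, rate, rng, training=True):
    """Inverted dropout: zero each value with probability `rate` during training
    and scale the survivors by 1 / (1 - rate) to keep the expected sum unchanged."""
    if not training or rate == 0.0:
        return list(activations)
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

def l1_penalty(weights, lam):
    """L1 penalty: lam times the sum of absolute weight values."""
    return lam * sum(abs(w) for w in weights)

def l2_penalty(weights, lam):
    """L2 penalty: lam times the sum of squared weight values."""
    return lam * sum(w * w for w in weights)

rng = random.Random(0)
hidden = [1.0] * 10
dropped = dropout(hidden, rate=0.5, rng=rng)  # roughly half become 0.0

weights = [0.5, -2.0, 1.0]
data_loss = 0.30  # hypothetical loss value from the data term
total_loss = data_loss + l2_penalty(weights, lam=0.01)
print(dropped, total_loss)
```

At prediction time dropout is switched off (`training=False`), so every node contributes.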

4. Applications of Deep Learning

Deep learning has made significant contributions to various fields, including image recognition, natural language processing, speech recognition, and autonomous vehicles. Let’s explore these applications in more detail.

4.1 Image Recognition

Image recognition is one of the most well-known applications of deep learning. Deep learning models use convolutional neural networks to analyze and classify images. This technology underpins facial recognition, object recognition, and the vision systems of self-driving cars.
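
The core operation of a convolutional layer is sliding a small grid of weights (a kernel) over the image. The sketch below is a minimal pure-Python version with no padding or stride options. The 4x4 toy image and the hand-written edge kernel are assumptions for illustration; in a trained network the kernel values are learned.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the operation that
    convolutional layers conventionally call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(kh) for dj in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]

# 4x4 toy image: dark left half (0), bright right half (1)
image = [[0, 0, 1, 1] for _ in range(4)]

# Hand-written vertical-edge kernel; a CNN would learn such values
kernel = [[-1, 1],
          [-1, 1]]

feature_map = conv2d(image, kernel)
for row in feature_map:
    print(row)
```

The output is largest where the kernel sits on the dark-to-bright boundary, which is how early convolutional layers pick out edges.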

Facial recognition technology uses deep learning models to detect and recognize faces in images and videos. It has numerous applications, such as security systems, social media platforms, and law enforcement agencies.

Object recognition, on the other hand, is used to identify objects in images and classify them according to their attributes. Self-driving cars use object recognition technology to identify obstacles, pedestrians, and other vehicles on the road.

4.2 Natural Language Processing

Natural language processing (NLP) is another field where deep learning has had a significant impact. NLP is the field concerned with enabling machines to understand and interpret human language. Deep learning models are used in chatbots, translation, and voice assistants such as Siri or Alexa.

Chatbots are computer programs that use NLP to simulate human conversation. They are used in various industries, such as customer service, healthcare, and finance. Translation technology uses deep learning models to translate text from one language to another. Voice assistants, such as Siri or Alexa, use deep learning models to understand and respond to voice commands.

4.3 Speech Recognition

Speech recognition technology is used in various applications, such as virtual assistants, dictation software, and speech-to-text technology. Deep learning models are trained using speech data to recognize words and phrases accurately.

This technology has made significant advancements in recent years, with virtual assistants such as Siri, Google Assistant, and Amazon Alexa becoming increasingly popular.

4.4 Autonomous Vehicles

Autonomous vehicles are widely seen as the future of transportation, and deep learning is an essential part of their development. Self-driving cars rely on deep learning models to navigate roads, interpret traffic signs, and avoid collisions. These models use data from sensors, cameras, and other sources to make decisions in real time.

The development of autonomous vehicles has the potential to revolutionize the transportation industry by reducing accidents, improving traffic flow, and cutting carbon emissions.

Bottom line

In conclusion, deep learning is a crucial component of the artificial intelligence revolution that we are witnessing today. It has the potential to transform various industries and solve some of the world’s most challenging problems.

With the availability of Big Data and advancements in computing power, deep learning is set to grow even more popular in the coming years.